In one of our previous articles “SQL Server 2014 – InMemory Table, Indexes and Stored Procedures”, we saw how to create in-memory tables and indexes. We also saw how to use stored procedures to interact with these tables.

In-memory tables can store multiple versions of a row. When you run transactions against these tables, SQL Server internally uses snapshot-based transaction isolation. With memory-optimized tables, the engine is optimistic: it assumes that transactions will commit without conflicting with each other.

Because there are no locks, there is no blocking in memory-optimized tables. This raises the question of what happens when there is a conflict. For example, suppose two transactions try to write the same row. The conflict is detected, and the second transaction to write the row always fails.

When one transaction is modifying a row, another transaction can still read the older, committed version of that row. But if no version of the row is available to the transaction, it simply fails.

Transactions on memory-optimized tables support a number of isolation levels, as described below –

· Snapshot Isolation – At this level, reads are consistent as of the start of the transaction, and writes are always consistent.

· Repeatable Read – At this level, we have a guarantee that the rows read during the transaction are still the same at the end of the transaction. If another transaction has changed them in the meantime, the commit fails.

· Serializable – At this level, the transaction also fails if repeating its scans at the end of the transaction, just before commit, would find a newly inserted row.
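As a rough illustration of how one of these levels is requested, the sketch below uses a table hint on the Employees table from this article. The selected columns are taken from the update script later in the article; nothing else about the table is assumed.

```sql
-- Sketch only: assumes Employees is the memory-optimized table from the previous article.
BEGIN TRAN;

SELECT EmployeeID, City
FROM Employees WITH (SNAPSHOT)   -- or WITH (REPEATABLEREAD) / WITH (SERIALIZABLE)
WHERE EmployeeID = 88569;

COMMIT TRAN;
```

The hint applies only to the table it is attached to, so each memory-optimized table in a statement can carry its own hint.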

Consider a few guidelines while working with memory-optimized table transactions –

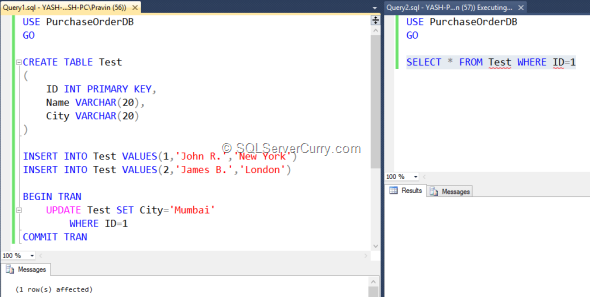

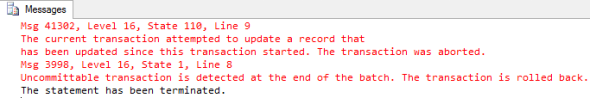

Let’s open SQL Server Management Studio and write the script below.

In the script, query pad 1 contains T-SQL commands that create a table named Test and insert two rows into it. We then start a transaction that updates the row with ID=1, without committing it. In the second query pad, we try to read the row that is being updated by the open transaction, so we will not see the row until the first transaction is either committed or rolled back.

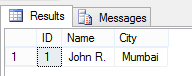

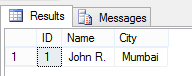
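For readers who cannot see the screenshots, the two query pads might look roughly like the sketch below. The table name Test and the ID column come from the text; the Name column and all inserted values are assumptions made only for illustration.

```sql
-- Query pad 1 (sketch; the Name column and its values are assumed)
CREATE TABLE Test (ID INT PRIMARY KEY, Name VARCHAR(50));
INSERT INTO Test (ID, Name) VALUES (1, 'First'), (2, 'Second');

BEGIN TRAN;
UPDATE Test SET Name = 'Changed' WHERE ID = 1;
-- No COMMIT or ROLLBACK yet: the transaction stays open and keeps its lock on the row.

-- Query pad 2 (a separate connection)
SELECT ID, Name FROM Test WHERE ID = 1;
-- Under the default READ COMMITTED level (without READ_COMMITTED_SNAPSHOT),
-- this SELECT waits until query pad 1 commits or rolls back.
```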

Try committing the transaction and you will see the updated row in your second query pad as shown below –

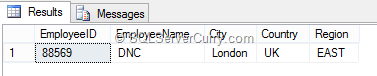

Now let’s compare this with the memory-optimized table that we created in the previous article. Let’s write the following script in query pad 1 –

Now update the above row and change the city from London to New York but do not commit it as shown below –

BEGIN TRAN

UPDATE Employees WITH (SNAPSHOT)

SET City='New York'

WHERE EmployeeID = 88569

-- COMMIT TRAN (do not run this yet; it is executed in a later step)

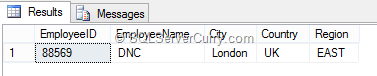

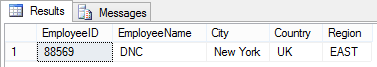

Now let’s try to read the updated row from our second query pad and look at the output. You will see the older version of the row, as shown below –

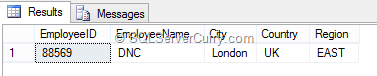

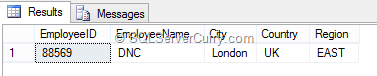
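The read in the second query pad could be as simple as the sketch below. It reuses the Employees table, the City column and the EmployeeID value from the update script above; the exact query in the screenshot may differ.

```sql
-- Query pad 2 (separate connection), while query pad 1 still has its transaction open
SELECT EmployeeID, City
FROM Employees WITH (SNAPSHOT)
WHERE EmployeeID = 88569;
-- Returns without waiting and shows the last committed value of City
-- (London in this walkthrough), not the uncommitted 'New York'.
```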

Commit the transaction and you will see the updated row as shown below –

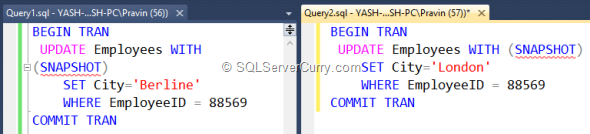

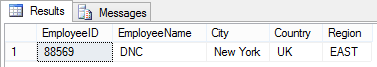

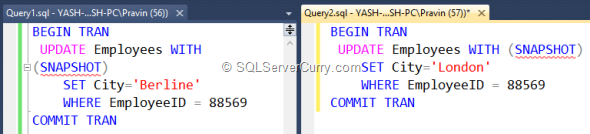

Now let’s update the city from our first query pad again without committing it, and then try to update the same row from our second query pad to see the difference –

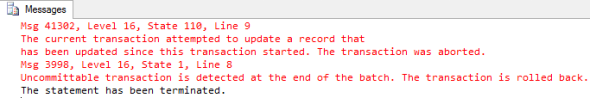
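A minimal sketch of the conflicting update in the second query pad is given below. The value 'Paris' is an arbitrary assumption; any update to the same row would behave the same way.

```sql
-- Query pad 2, run while query pad 1 has an uncommitted update on the same row
BEGIN TRAN;

UPDATE Employees WITH (SNAPSHOT)
SET City = 'Paris'               -- 'Paris' is an arbitrary illustrative value
WHERE EmployeeID = 88569;
-- This statement does not wait for query pad 1. It fails right away with a
-- write-conflict error (error 41302), and the transaction is aborted.
```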

The output of the transaction in the second query pad is as shown below. The error says – The current transaction attempted to update a record that has been updated since this transaction…

The error says that the current transaction cannot update the row, because the row has been modified by another transaction since the current transaction started.

So, in this situation, the second transaction always fails. We can also write retry logic that checks the row again and then retries the modification.
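One possible shape for such retry logic is sketched below, using TRY...CATCH around the update from this article. The retry count and the delay are arbitrary assumptions; the error numbers are the ones SQL Server raises for conflicts and validation failures on memory-optimized tables.

```sql
-- Sketch of a retry loop; the retry count and delay are arbitrary assumptions.
DECLARE @retry INT = 5;

WHILE @retry > 0
BEGIN
    BEGIN TRY
        BEGIN TRAN;

        UPDATE Employees WITH (SNAPSHOT)
        SET City = 'New York'
        WHERE EmployeeID = 88569;

        COMMIT TRAN;
        SET @retry = 0;                 -- success: leave the loop
    END TRY
    BEGIN CATCH
        SET @retry -= 1;

        -- A failed transaction must be rolled back before it can be retried.
        IF XACT_STATE() <> 0
            ROLLBACK TRAN;

        -- 41302 = write conflict, 41305 / 41325 = validation failures,
        -- 41301 = commit dependency failure. Anything else is re-raised.
        IF @retry = 0 OR ERROR_NUMBER() NOT IN (41302, 41305, 41325, 41301)
            THROW;

        WAITFOR DELAY '00:00:00.010';   -- brief pause before the next attempt
    END CATCH
END;
```

Retrying the whole transaction, rather than only the failed statement, matters here because the conflict aborts the transaction and a new attempt needs a fresh snapshot of the data.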

Summary – In this article, we have seen how to implement a transaction with memory-optimized tables in SQL Server 2014.

Keep a few guidelines in mind while working with memory-optimized table transactions –

- Always declare which isolation level you want, as described above.

- Write logic in your transactions to handle conflicts and validation failures, so that a failed transaction can be dealt with.

- Try to avoid long-running transactions.
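If adding a hint to every statement is impractical, one alternative way to follow the first guideline is a database-level option, sketched below. Whether it suits a given database is an assumption to verify; it changes behavior for explicit transactions only.

```sql
-- Sketch: run in the database that contains the memory-optimized tables.
-- Access to memory-optimized tables inside explicit transactions that run at
-- READ COMMITTED (or lower) is then elevated to SNAPSHOT without a table hint.
ALTER DATABASE CURRENT
SET MEMORY_OPTIMIZED_ELEVATE_TO_SNAPSHOT = ON;
```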
